FILO

Linux junkie (talk | contribs) No edit summary |

No edit summary |

||

| (44 intermediate revisions by 14 users not shown) | |||

| Line 1: | Line 1: | ||

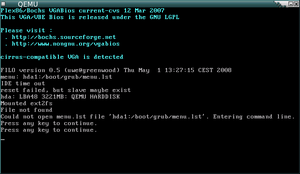

[[Image:Qemu filo.png|thumb|right|FILO trying to load menu.lst.]] | |||

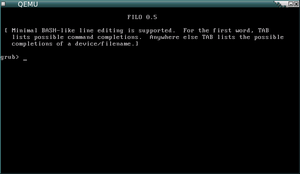

[[Image:Qemu filo prompt.png|thumb|right|FILO prompt.]] | |||

'''FILO''' is a bootloader which loads boot images from a local filesystem, | '''FILO''' is a bootloader which loads boot images from a local filesystem, | ||

without help from legacy BIOS services. | without help from legacy BIOS services. | ||

| Line 6: | Line 9: | ||

== Download FILO == | == Download FILO == | ||

Download the latest version of FILO from | Download the latest version of FILO from Git with | ||

$ | $ git clone http://review.coreboot.org/p/filo.git | ||

You can also browse the source code online at | You can also browse the source code online at | ||

http:// | http://review.coreboot.org/gitweb?p=filo.git | ||

== Features == | == Features == | ||

* Supported boot devices: IDE hard disk, SATA hard disk, CD-ROM, and system memory (ROM) | * Supported boot devices: IDE hard disk, SATA hard disk, CD-ROM, USB Mass Storage, and system memory (ROM) | ||

* Supported filesystems: ext2, fat, jfs, minix, reiserfs, xfs, and iso9660 | * Supported filesystems: ext2, fat, jfs, minix, reiserfs, xfs, and iso9660 | ||

* Supported image formats: ELF and [b]zImage (a.k.a. /vmlinuz) | * Supported image formats: ELF and [b]zImage (a.k.a. /vmlinuz) | ||

| Line 25: | Line 28: | ||

* Auxiliary tool to compute checksum of ELF boot images | * Auxiliary tool to compute checksum of ELF boot images | ||

* Full 32-bit code, no BIOS calls | * Full 32-bit code, no BIOS calls | ||

* uses [[libpayload]] | |||

== Requirements == | == Requirements == | ||

| Line 32: | Line 36: | ||

for more information. | for more information. | ||

Recent version of GNU toolchain is required to build. | |||

Recent version of GNU toolchain is required to build. | |||

We have tested with Debian/woody (gcc 2.95.4, binutils 2.12.90.0.1, | We have tested with Debian/woody (gcc 2.95.4, binutils 2.12.90.0.1, | ||

make 3.79.1), Debian/sid (gcc 3.3.2, binutils 2.14.90.0.6, | make 3.79.1), Debian/sid (gcc 3.3.2, binutils 2.14.90.0.6, | ||

make 3.80) and different versions of SUSE Linux from 9.0 to | make 3.80) and different versions of SUSE Linux from 9.0 to 11.0. | ||

FILO will use the coreboot crossgcc if you have it built and it can be found. | |||

FILO uses coreboot's '''libpayload'''. It is easiest to locate and build FILO in the '''coreboot/payloads''' directory. | |||

== Building on 64-bit OS specifics == | |||

If you will be building FILO on AMD64 platform for Debian install the '''gcc-multilib''' package. | |||

x64/AMD64 machines work fine when compiling FILO in 32-bit mode. | |||

(coreboot uses 32-bit mode and Linux kernel does the transition to 64-bit mode) | |||

== | == Preparation == | ||

Before you can build FILO, you have to build libpayload. If your filo directory is located inside the coreboot/payloads directory, you don't have to do anything special. If for some reason you want to compile FILO of the coreboot/payloads directory, you will need to tell the makefile where libpayload is. Open filo/Makefile in your favorite text editor and change this line | |||

= | export LIBCONFIG_PATH := $(src)/../libpayload | ||

to match the location of the libpayload directory on your system: | |||

export LIBCONFIG_PATH := /home/YOUR_USER_NAME/PATH_TO_COREBOOT/payloads/libpayload | |||

== Configuration == | |||

==== | |||

Configure FILO (and libpayload) using the Kconfig interface: | |||

$ make menuconfig | |||

This will run menuconfig twice -- the first time for libpayload, the second time for FILO. | |||

== Building == | |||

Then running make will build filo.elf, the ELF boot image of FILO. | |||

$ make | |||

If you are compiling on an AMD64 platform and compiler complains, instead of "make" you need to write | |||

$ make CC="gcc -m32" LD="ld -b elf32-i386" HOSTCC="gcc" AS="as --32" | |||

Use '''build/filo.elf''' as your payload of coreboot, or a boot image for | |||

[[Etherboot]]. | |||

Alternatively, you can build libpayload and FILO in one go using the build.sh script, with the drawback that you'll get the default options for both of them: | |||

$ ./build.sh | |||

Here is the short listing how to build FILO from git | |||

cd coreboot/payloads | |||

git clone http://review.coreboot.org/p/filo.git | |||

cd filo | |||

make config | |||

make | |||

== Credits == | |||

* This software was originally developed by SONE Takeshi <ts1@tsn.or.jp> | |||

* It has been significantly enhanced and is now maintained by [mailto:stepan@coresystems.de Stefan Reinauer]. | |||

* It uses libpayload from Uwe Hermann and Jordan Crouse | |||

== Troubleshooting == | |||

If you experience trouble compiling or using FILO, please report with a build log or detailed error description to the [[Mailinglist|coreboot mailing list]]. | |||

== | == Notes == | ||

=== CD-ROM Booting === | |||

To boot a CD-ROM or DVD you only need to specify the drive '''without a partition number'''. For example to boot to the primary drive on the secondary IDE channel you would use '''hdc''' and not '''hdc1''' in FILO. | |||

=== Grub-like Interface === | |||

If you are using FILO with '''CONFIG_USE_GRUB''', and want to boot to your Linux install disk you have to do a mixture of GRUB and FILO commands. | |||

Like GRUB you have to append a kernel (and parameters), then an initrd, and give a boot command. | |||

Like FILO you have to give absolute paths. | |||

Example to boot to a GeeXboX install CD-ROM: | |||

hdc:/ | filo> kernel hdc:/GEEXBOX/boot/vmlinuz root=/dev/ram0 rw init=linuxrc boot=cdrom installator | ||

Press <ENTER> | |||

filo> initrd hdc:/GEEXBOX/boot/initrd.gz | |||

Press <ENTER> | |||

filo> boot | |||

Press <ENTER> | |||

Your system will now boot right into the Linux install. | |||

=== NVRAM Parsing === | |||

FILO parses the following NVRAM variables: | |||

* 'boot_devices' can contain a list of boot devices seperated by semicolons. FILO will try to load filo.lst / menu.lst from any of these devices. | |||

Example how to set: | |||

nvramtool -w "boot_devices=hda1:/boot/filo;hdc:" | |||

= Contributing = | |||

To be able to contribute you will need a Gerrit account. See [[Git]] on how to get one and how to push new code to the repository. The instructions there are valid also for FILO, just change '''coreboot''' to '''filo''' in ''directory'' names etc. (not hostnames). | |||

Latest revision as of 13:44, 30 August 2013

FILO is a bootloader which loads boot images from a local filesystem, without help from legacy BIOS services.

Expected usage is to flash it into the BIOS ROM together with coreboot.

Download FILO

Download the latest version of FILO from Git with

$ git clone http://review.coreboot.org/p/filo.git

You can also browse the source code online at http://review.coreboot.org/gitweb?p=filo.git

Features

- Supported boot devices: IDE hard disk, SATA hard disk, CD-ROM, USB Mass Storage, and system memory (ROM)

- Supported filesystems: ext2, fat, jfs, minix, reiserfs, xfs, and iso9660

- Supported image formats: ELF and [b]zImage (a.k.a. /vmlinuz)

- Supports boot disk image of El Torito bootable CD-ROM. "hdc1" means the boot disk image of the CD-ROM at hdc.

- Supports loading image from raw device with user-specified offset

- Console on VGA + keyboard, serial port, or both

- Line editing with ^H, ^W and ^U keys to type arbitrary filename to boot

- Full support for the ELF Boot Proposal (where is it btw, Eric)

- Auxiliary tool to compute checksum of ELF boot images

- Full 32-bit code, no BIOS calls

- Uses libpayload

Requirements

Only the x86 (x64) architecture is currently supported. Some efforts have been made to get FILO running on PPC. Contact the coreboot mailing list for more information.

A recent version of the GNU toolchain is required to build FILO.

We have tested with Debian/woody (gcc 2.95.4, binutils 2.12.90.0.1, make 3.79.1), Debian/sid (gcc 3.3.2, binutils 2.14.90.0.6, make 3.80) and different versions of SUSE Linux from 9.0 to 11.0.

FILO will use the coreboot crossgcc if you have it built and it can be found.

FILO uses coreboot's libpayload. It is easiest to locate and build FILO in the coreboot/payloads directory.

Building on a 64-bit OS

If you are building FILO on an AMD64 platform running Debian, install the gcc-multilib package.

x64/AMD64 machines work fine when compiling FILO in 32-bit mode. (coreboot runs in 32-bit mode, and the Linux kernel handles the transition to 64-bit mode.)

Preparation

Before you can build FILO, you have to build libpayload. If your filo directory is located inside the coreboot/payloads directory, you don't have to do anything special. If for some reason you want to compile FILO outside of the coreboot/payloads directory, you will need to tell the makefile where libpayload is. Open filo/Makefile in your favorite text editor and change this line

export LIBCONFIG_PATH := $(src)/../libpayload

to match the location of the libpayload directory on your system:

export LIBCONFIG_PATH := /home/YOUR_USER_NAME/PATH_TO_COREBOOT/payloads/libpayload
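The same edit can be scripted instead of made by hand. The following is a minimal sketch, not part of FILO: the function name set_libconfig_path is hypothetical, and it assumes the Makefile contains a line that begins with "export LIBCONFIG_PATH :=" exactly as shown above.

```shell
#!/bin/sh
# Hypothetical helper (not shipped with FILO): rewrite the LIBCONFIG_PATH
# line of a Makefile so that it points at a given libpayload directory.
# Usage: set_libconfig_path MAKEFILE LIBPAYLOAD_DIR
set_libconfig_path() {
    makefile=$1
    libpayload_dir=$2
    tmp="$makefile.tmp.$$"
    # Replace the whole "export LIBCONFIG_PATH := ..." line. '|' is used as
    # the sed delimiter so that slashes in the path need no escaping; a path
    # containing '|' or '&' would need extra quoting.
    sed "s|^export LIBCONFIG_PATH :=.*|export LIBCONFIG_PATH := $libpayload_dir|" \
        "$makefile" > "$tmp" && mv "$tmp" "$makefile"
}
```

For example, set_libconfig_path filo/Makefile "$HOME/coreboot/payloads/libpayload" would update the line in place, where the path is a placeholder for wherever libpayload lives on your system.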

Configuration

Configure FILO (and libpayload) using the Kconfig interface:

$ make menuconfig

This will run menuconfig twice -- the first time for libpayload, the second time for FILO.

Building

Then running make will build filo.elf, the ELF boot image of FILO.

$ make

If you are compiling on an AMD64 platform and the compiler complains, run the following instead of plain "make":

$ make CC="gcc -m32" LD="ld -b elf32-i386" HOSTCC="gcc" AS="as --32"
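If you build on both 32-bit and 64-bit hosts, the choice between the two invocations can be wrapped in a small script. This is a sketch under stated assumptions: the function name filo_build and the MAKE override are hypothetical, and it always applies the 32-bit flags on a 64-bit host, whereas the text above only calls for them when the plain build fails.

```shell
#!/bin/sh
# Hypothetical wrapper (not shipped with FILO): run make with the 32-bit
# flags quoted above when the host architecture is 64-bit x86.
# Usage: filo_build [ARCH]    (ARCH defaults to the output of `uname -m`)
filo_build() {
    arch=${1:-$(uname -m)}
    case "$arch" in
        x86_64|amd64)
            # Flags copied verbatim from the command line shown above.
            "${MAKE:-make}" CC="gcc -m32" LD="ld -b elf32-i386" HOSTCC="gcc" AS="as --32"
            ;;
        *)
            # Any other host: plain make.
            "${MAKE:-make}"
            ;;
    esac
}
```

Setting MAKE=echo before calling filo_build prints the arguments instead of starting a build, which is a convenient way to check what would be run.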

Use build/filo.elf as the payload for coreboot, or as a boot image for Etherboot.

Alternatively, you can build libpayload and FILO in one go using the build.sh script, with the drawback that you'll get the default options for both of them:

$ ./build.sh

Here is a short listing of how to build FILO from Git:

$ cd coreboot/payloads
$ git clone http://review.coreboot.org/p/filo.git
$ cd filo
$ make config
$ make

Credits

- This software was originally developed by SONE Takeshi <ts1@tsn.or.jp>

- It has been significantly enhanced and is now maintained by Stefan Reinauer.

- It uses libpayload from Uwe Hermann and Jordan Crouse

Troubleshooting

If you experience trouble compiling or using FILO, please report with a build log or detailed error description to the coreboot mailing list.

Notes

CD-ROM Booting

To boot from a CD-ROM or DVD, specify only the drive, without a partition number. For example, to boot from the primary drive on the secondary IDE channel, use hdc and not hdc1 in FILO.

Grub-like Interface

If you are using FILO with CONFIG_USE_GRUB and want to boot from your Linux install disk, you have to use a mixture of GRUB and FILO commands.

As in GRUB, you specify a kernel (and its parameters), then an initrd, and then give a boot command. As in FILO, you have to give absolute paths.

Example of booting a GeeXboX install CD-ROM:

filo> kernel hdc:/GEEXBOX/boot/vmlinuz root=/dev/ram0 rw init=linuxrc boot=cdrom installator

Press <ENTER>

filo> initrd hdc:/GEEXBOX/boot/initrd.gz

Press <ENTER>

filo> boot

Press <ENTER>

Your system will now boot right into the Linux install.

NVRAM Parsing

FILO parses the following NVRAM variables:

- 'boot_devices' can contain a list of boot devices separated by semicolons. FILO will try to load filo.lst / menu.lst from any of these devices.

Example of how to set it:

nvramtool -w "boot_devices=hda1:/boot/filo;hdc:"
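To check a boot_devices value before writing it to NVRAM, it can help to split it the same way the format is described above. The sketch below only illustrates the semicolon-separated layout; the function name list_boot_devices is hypothetical, and it is an assumption that FILO's own parser treats empty entries the same way.

```shell
#!/bin/sh
# Hypothetical helper (not part of FILO or nvramtool): print one boot
# device entry per line from a semicolon-separated boot_devices value.
# The body runs in a subshell so IFS and globbing changes do not leak.
list_boot_devices() (
    IFS=';'     # split on semicolons only
    set -f      # disable filename globbing while splitting
    for entry in $1; do
        # Skip empty entries such as those produced by ";;".
        if [ -n "$entry" ]; then
            printf '%s\n' "$entry"
        fi
    done
)
```

Running list_boot_devices "hda1:/boot/filo;hdc:" prints the two entries hda1:/boot/filo and hdc: on separate lines.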

Contributing

To contribute, you will need a Gerrit account. See Git for how to get one and how to push new code to the repository. The instructions there also apply to FILO; just change coreboot to filo in directory names etc. (not in hostnames).